Remember the episode of “The Twilight Zone” that opens with actress Inger Stevens as the moody daughter of a wealthy couple, a young woman who constantly complains about her unhappy life in a luxurious home full of service robots? By the end of the story, titled “The Lateness of the Hour,” her disappointed “parents” decide to “reprogram” their privileged “daughter” into a maid, in the process wiping away all of the robot’s embedded memories of “its” previous existence.

Over the past 60-plus years, this and other productions linking science fiction and real life have clearly left an impression on millions of TV viewers of all ages. First fantasists, then scientists, then businesspeople, and now the general public have come to a collective realization that “Artificial intelligence and robotics are the driving force of the future,” as the fittingly named e-zine Analytics Insight (AI) recently declared.

The robots now being built, largely by hand, will likely bear little resemblance to Stevens, or for that matter to any other human, at least for some time.

Still, “For better or worse, robots with humanoid features are often compared to humans — we want to know if they’re anywhere close to doing the same kinds of things that we do, and with a few exceptions, the answer is ‘probably not,’” writes Evan Ackerman of IEEE Spectrum, a publication of the Institute of Electrical and Electronics Engineers.

Nonetheless, Analytics Insight reports, “In the next decade, you will surely see some stunning technological revelations based on AI. AI is all about data, and when properly implemented, it will use the given data to our benefit, automating most of the processes and making our lives easier.”

Machines and computers will “positively manage” most of our dealings, the cheerleading Analytics Insight story further prophesied, adding, “It is just the start. AI, machine learning, and robotics are bound to progress further in the coming years before they become commonplace.”

Science Fact

One well-known example of this type of robot technology that has recently been interacting with everyday folks is Flippy, the burger-flipping robot created by Pasadena-based Miso Robotics.

“We’ve been trained since childhood that robotics were coming in the future,” Louise Perrin told USA Today before ordering lunch one day at CaliBurger, where Flippy was first put on display turning burgers in the chain’s Pasadena kitchen in 2018. CaliBurger, according to its website, has since closed due to the pandemic, and Flippy has moved on: Miso Robotics teamed with the Ohio-based White Castle chain, and the robot underwent a major redesign that made it even more efficient and productive.

“To be a part of it, to see it and watch it happen live in front of you … is absolutely incredible,” Perrin said at the time.

Not only were Perrin and the rest of us “part of it” then; we are now characters in a Rod Serling fantasy, if only by virtue of being in Pasadena, home to Caltech, NASA’s Jet Propulsion Laboratory, and thriving private robotics and other high-tech businesses like Miso Robotics.

At Caltech’s Center for Autonomous Systems and Technology (CAST), scientists, mathematicians and engineers are busy bringing the dreams of Serling and others to life, not only here on Earth but also, with the help of the Caltech-managed Jet Propulsion Laboratory, on the Moon, Mars, and other worlds.

“The current state-of-the-art in autonomous systems is very promising on two divergent fronts,” Caltech’s Morteza “Mory” Gharib says on the center’s website. Gharib is the Hans W. Liepmann Professor of Aeronautics and Bioinspired Engineering and director of the Graduate Aerospace Laboratories at Caltech.

Vortex dynamics, active and passive flow control, nano/micro fluid dynamics, bio-inspired wind and hydro energy harvesting, and advanced (liquid) flow-imaging diagnostics are among Gharib’s many heady research interests in fluid dynamics and aeronautics, according to his biography.

“The bodies, or machines and sensors, have become more and more sophisticated and capable,” Gharib recently observed in Caltech Magazine. “Meanwhile, the algorithms that collect and interpret behavior are increasingly fine-tuned. We plan to bring these two together through a series of ‘Moonshot’ challenges that we will undertake in the coming years.”

The wide-ranging Moonshot program, according to the CAST website, is organized into five separate scenarios in which robots will play key roles:

Moonshot #1: Explorers

Planetary, Underwater, and Space Explorers

- Robot mobility and teaming to navigate unknown and complex terrestrial environments

- Planetary science explorers surviving and operating remotely on land, in water, and in space

Moonshot #2: Guardians

Dynamic Event Monitors and First Responders

- Robot teams monitoring and responding to dynamic events (earthquakes, tsunamis, plumes, etc.)

- Surveying, gathering and distributing critical information, thus multiplying the effectiveness of human responders

Moonshot #3: Transformers

Swarms of Autonomous Robots Transforming Shapes and Functions

- In-space and on-surface deployment, construction, and assembly of complex structures (e.g., space telescopes, planetary habitats)

- Formation flying, self-assembly, and reconfiguration of autonomous swarms for science, observation, and communication

Moonshot #4: Transporters

Robotic Flying Ambulances and Delivery Drones

- Safe operation of flying ambulances and urban aerial mobility systems surviving all weather conditions

- Aerial delivery systems on Earth and Mars

Moonshot #5: Partners

Robots Helping and Entertaining People

- Butlers and nurses (think Inger Stevens) caring for the sick and elderly

- Entertainers and guides safely interacting with visitors

- Robots helping doctors perform surgery and improve recovery

- Assistive devices improving function, mobility, and quality of life

Large-scale machine learning and high-dimensional statistics are where Animashree Anandkumar’s interests lie. Anandkumar, the Bren Professor of Computing and Mathematical Sciences in Caltech’s Division of Engineering and Applied Science, works with Gharib at CAST. Her work focuses on developing efficient techniques to speed up the optimization algorithms that underpin machine-learning systems. According to her bio, she is collaborating with Bren Professor of Aerospace Soon-Jo Chung and Professor of Computing and Mathematical Sciences Yisong Yue on embodied-intelligence projects.

Yue joined the Caltech faculty as an assistant professor in 2014 and became a full professor in 2020. He was previously a research scientist at Disney Research. Like Anandkumar, Yue focuses primarily on the theory and application of statistical machine learning.

“At CAST, I’m excited about teaching robots to think,” Anandkumar said in a separate interview with the university’s campus publication.

“Let’s say you want a robot to walk in an autonomous way. Right now, you have to manually feed in the parameters for each type of terrain on which the robot is expected to walk. This is where we need reinforcement learning, which I work on, because then the robot is continuously recording the data as it is walking. And based on that, it can adapt very quickly — not just to avoid falls, but also to develop a good navigation strategy,” she explained.

“So that’s one of the immediate goals that I see: teaching robots to learn on the fly as the environment is changing, and changing their strategies accordingly,” Anandkumar said.
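For readers curious what “learning on the fly” can look like in practice, here is a minimal Python sketch. It is not Anandkumar’s method. It is a deliberately simplified stand-in called a contextual bandit, a stripped-down cousin of reinforcement learning, in which a hypothetical robot tries different gaits on each type of ground, scores how well each attempt went, and gradually favors whatever works best. The terrain names, gait settings and scores are all invented for illustration.

```python
import random

# Hypothetical gait settings and terrains; none of these come from CAST.
GAITS = ["short_steps", "medium_steps", "long_strides"]
TERRAINS = ["pavement", "gravel", "ice"]

# Invented "how well did that go" scores (1.0 = fast and stable, 0.0 = a fall).
TRUE_SCORES = {
    "pavement": {"short_steps": 0.4, "medium_steps": 0.7, "long_strides": 0.9},
    "gravel": {"short_steps": 0.6, "medium_steps": 0.8, "long_strides": 0.3},
    "ice": {"short_steps": 0.9, "medium_steps": 0.4, "long_strides": 0.1},
}


def simulated_reward(terrain, gait, rng):
    """Stand-in for the robot's sensors: the true score plus a little noise."""
    return TRUE_SCORES[terrain][gait] + rng.uniform(-0.05, 0.05)


class TerrainAdaptiveLearner:
    """Epsilon-greedy learner keeping one score table per terrain (a contextual bandit)."""

    def __init__(self, gaits, epsilon=0.1, optimistic_start=2.0, seed=0):
        self.gaits = list(gaits)
        self.epsilon = epsilon
        self.optimistic_start = optimistic_start
        self.rng = random.Random(seed)
        self.values = {}  # terrain -> {gait: average reward seen so far}
        self.counts = {}  # terrain -> {gait: number of times tried}

    def _tables(self, terrain):
        if terrain not in self.values:
            # Optimistic start: every untried gait looks better than any real
            # result, so greedy choices work through all gaits before settling.
            self.values[terrain] = {g: self.optimistic_start for g in self.gaits}
            self.counts[terrain] = {g: 0 for g in self.gaits}
        return self.values[terrain], self.counts[terrain]

    def choose(self, terrain):
        values, _ = self._tables(terrain)
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.gaits)  # explore: try something at random
        return max(values, key=values.get)  # exploit: best-scoring gait so far

    def update(self, terrain, gait, reward):
        values, counts = self._tables(terrain)
        counts[gait] += 1
        # Running average; the first real reward replaces the optimistic start.
        values[gait] += (reward - values[gait]) / counts[gait]

    def best_gait(self, terrain):
        values, _ = self._tables(terrain)
        return max(values, key=values.get)


def train(steps=300, block=20, seed=0):
    """Walk for `steps` steps; the terrain underfoot changes every `block` steps."""
    learner = TerrainAdaptiveLearner(GAITS, seed=seed)
    env_rng = random.Random(seed + 1)
    for step in range(steps):
        terrain = TERRAINS[(step // block) % len(TERRAINS)]
        gait = learner.choose(terrain)
        learner.update(terrain, gait, simulated_reward(terrain, gait, env_rng))
    return learner


if __name__ == "__main__":
    learner = train()
    for terrain in TERRAINS:
        print(terrain, "->", learner.best_gait(terrain))
```

Because the sketch keeps a separate score table for each terrain, the robot switches strategies as soon as the ground changes, settling here on long strides for pavement, medium steps for gravel and short steps for ice. The occasional random choice (the `epsilon` setting) keeps it re-checking alternatives rather than locking in on a first impression. Real legged robots learn far richer policies from continuous sensor data, but the basic loop of act, measure, and adjust is the same idea.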

‘Leonardo,’ ‘Cassie’ & Friends

CAST researchers have developed a robot called the LEg ON Aerial Robotic DrOne, or Leonardo, which, according to IEEE Spectrum, combines a bipedal robot with a drone-like thruster system for balancing, agility and jumping. Often described as a flying robot with legs, Leonardo would be a very good thing to have on hand in rough terrain.

“Longer term, the idea is that Leo will be doing a lot of jumping, with the thrusters significantly augmenting both height and distance, with a multimodal nature that will increase versatility, reliability, and endurance,” IEEE Spectrum reports.

Cassie (pictured) is a bipedal robot built in 2017 by Oregon-based Agility Robotics, a spin-off of Oregon State University, where the technology was first developed. Cassie is well balanced and can walk and run much as humans or animals do. It can also handle diverse and complex terrain, making it well suited to search and rescue or package delivery. It’s been dubbed a “Delivery Ostrich” by IEEE because of the way it walks and its ability to haul heavy objects.

Can you imagine this device delivering packages to your front door?

Agility Robotics can.

It seems the only thing keeping such robots out of the average household is cost. Complete with controllers and teach pendants, new industrial robots cost from $50,000 to $80,000. Once application-specific peripherals are added, a robot system costs anywhere from $100,000 to $150,000, according to robots.com.

Cassie takes its cues from an earlier Oregon State robot named ATRIAS, which walked well enough but fell down a lot, presenting problems in the durability department.

“We all want the cost of manufactured goods to be significantly reduced through more efficient logistics throughout the manufacturing process. Cassie is a step in this direction: It is a first product that will initially be sold to research institutions to support a community of researchers solving the problem of locomotion in the human environment, and Cassie will continue to improve and evolve, as Agility Robotics focuses on products and commercial customers,” wrote company co-founder and OSU professor Jonathan Hurst.

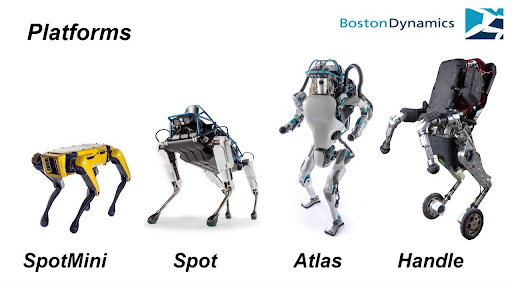

Much as Agility Robotics grew out of Oregon State University, Boston Dynamics is an offshoot of the Massachusetts Institute of Technology (MIT) and another of the handful of companies developing and improving legged and humanoid robot technology. The company was founded in 1992, and Hyundai Motor Group agreed to acquire it in 2020.

“For better or worse,” writes Ackerman at IEEE Spectrum, “robots with humanoid features are often compared to humans (or other biomimics) — we want to know if they’re anywhere close to doing the same kinds of things that we do, and with a few exceptions, the answer is probably not.

“Humanoid robots are difficult to build and program, but we keep doing it because it makes some amount of sense to have robots that look and function like we do operating in the same environments that we operate in.

“However,” Ackerman wrote, “one of the great things about robots is that they don’t have to be constrained by the same boring humanoid-ness that we are, and we can do all kinds of things to them to make them more capable than we’ll ever be.”